Adding the BatchedNMSDynamic_TRT plugin to the SSD MobileNet ONNX model - TensorRT - NVIDIA Developer Forums

GitHub - brokenerk/TRT-SSD-MobileNetV2: Python sample for referencing pre-trained SSD MobileNet V2 (TF 1.x) model with TensorRT

GitHub - chenzhi1992/TensorRT-SSD: Use TensorRT API to implement Caffe-SSD, SSD(channel pruning), Mobilenet-SSD

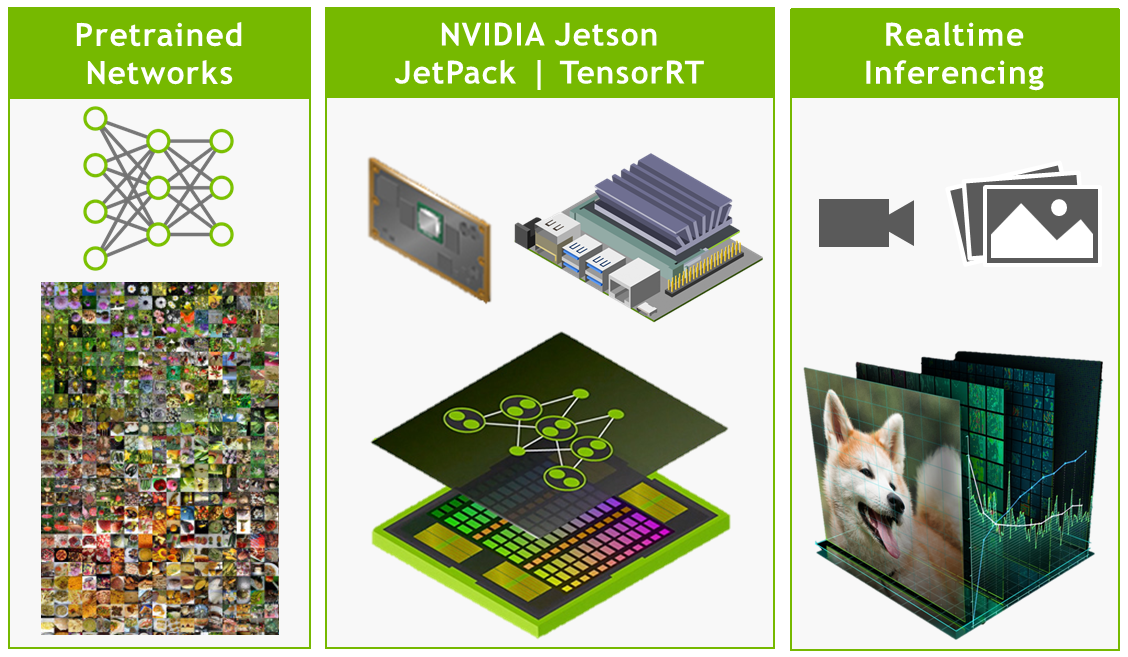

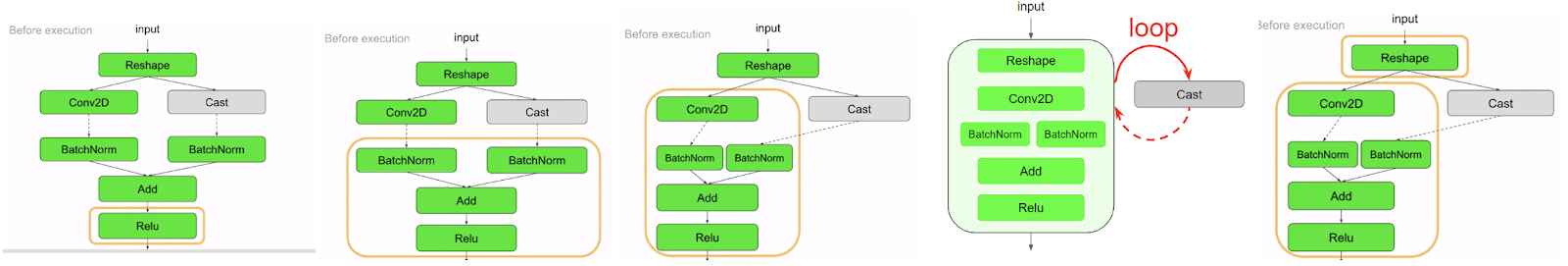

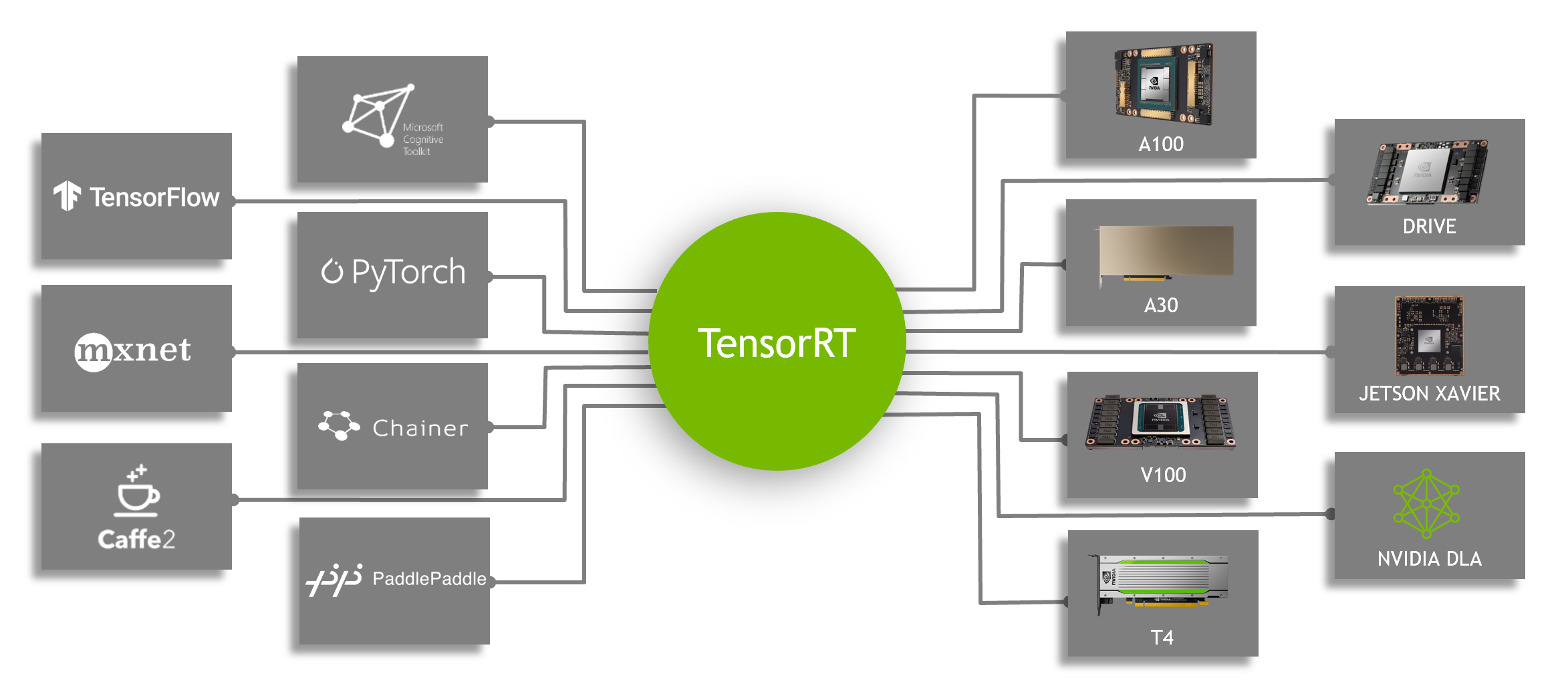

Speeding Up Deep Learning Inference Using TensorFlow, ONNX, and NVIDIA TensorRT | NVIDIA Technical Blog