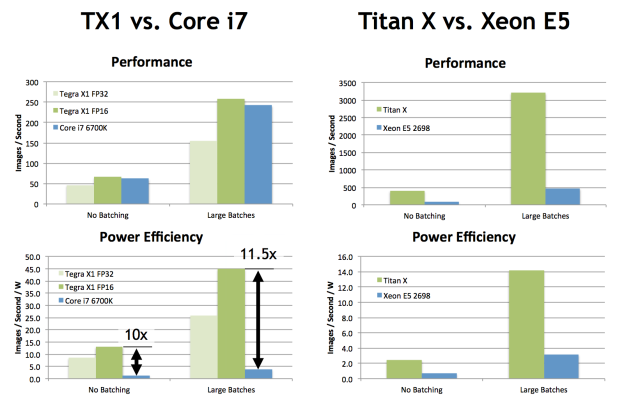

Bikal Tech on Twitter: "Performance #GPU vs #CPU for #AI optimisation #HPC #Inference and #DL #Training https://t.co/Aqf0UD5n7m" / Twitter

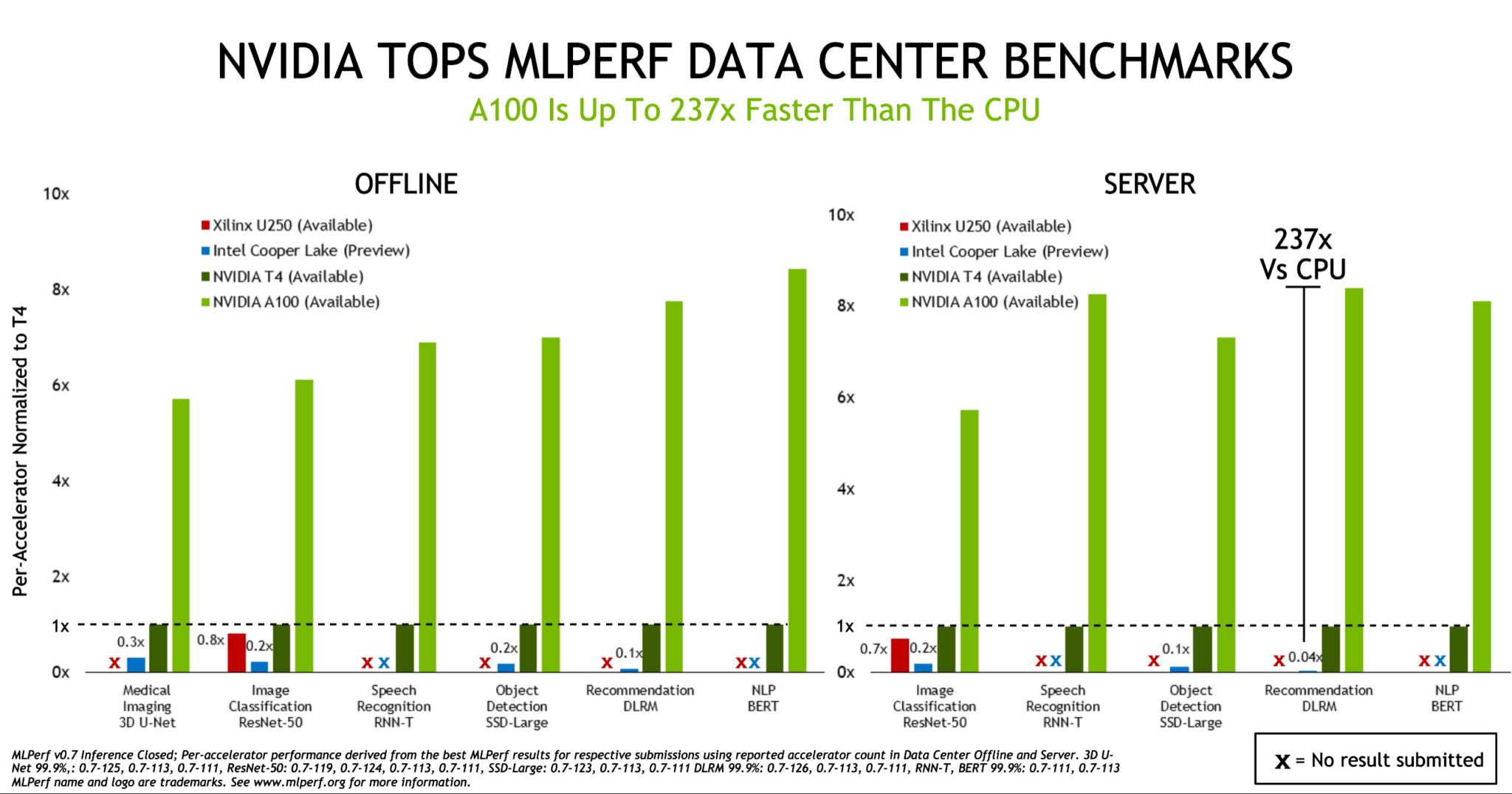

FPGA-based neural network software gives GPUs competition for raw inference speed | Vision Systems Design

DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

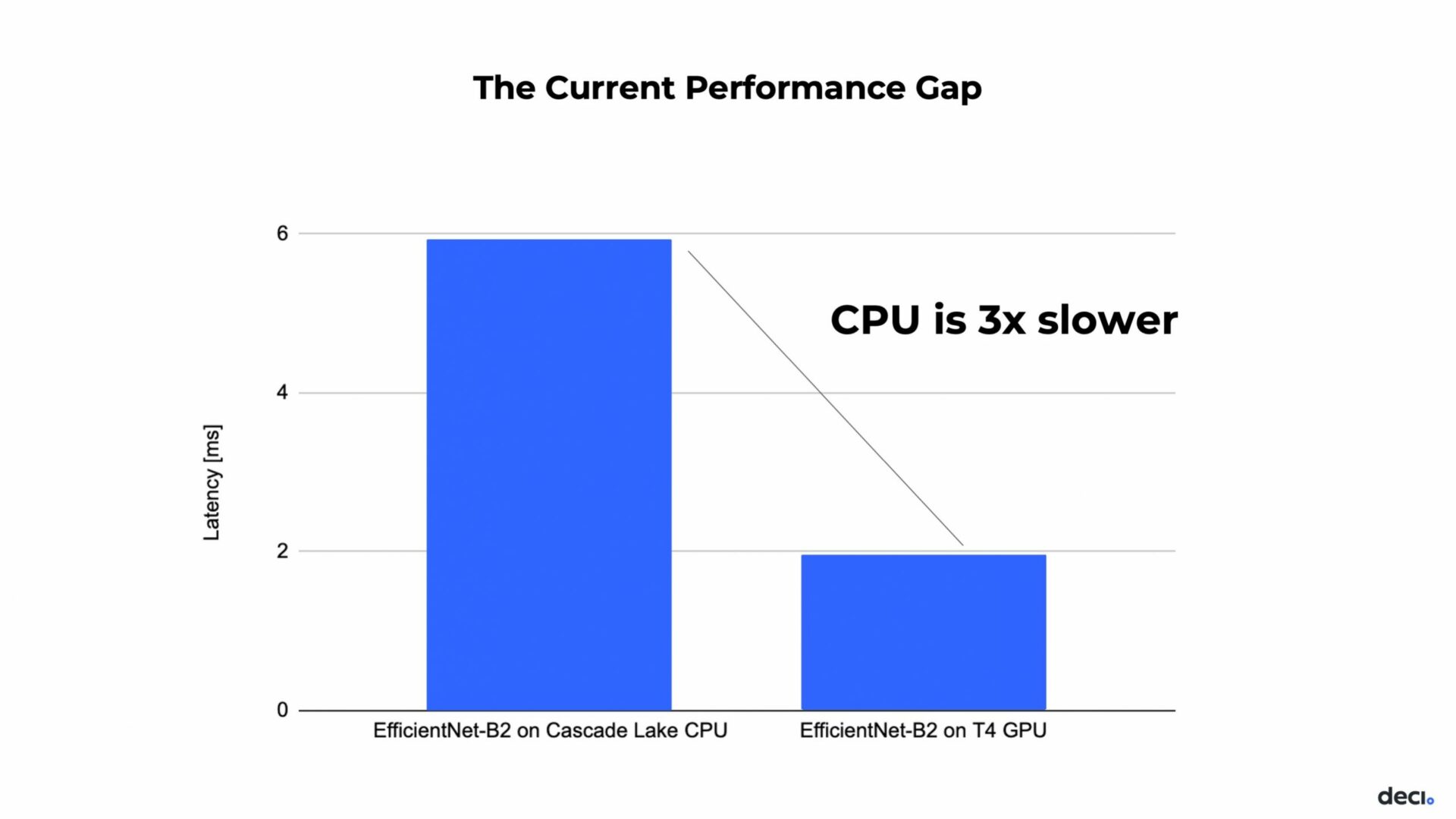

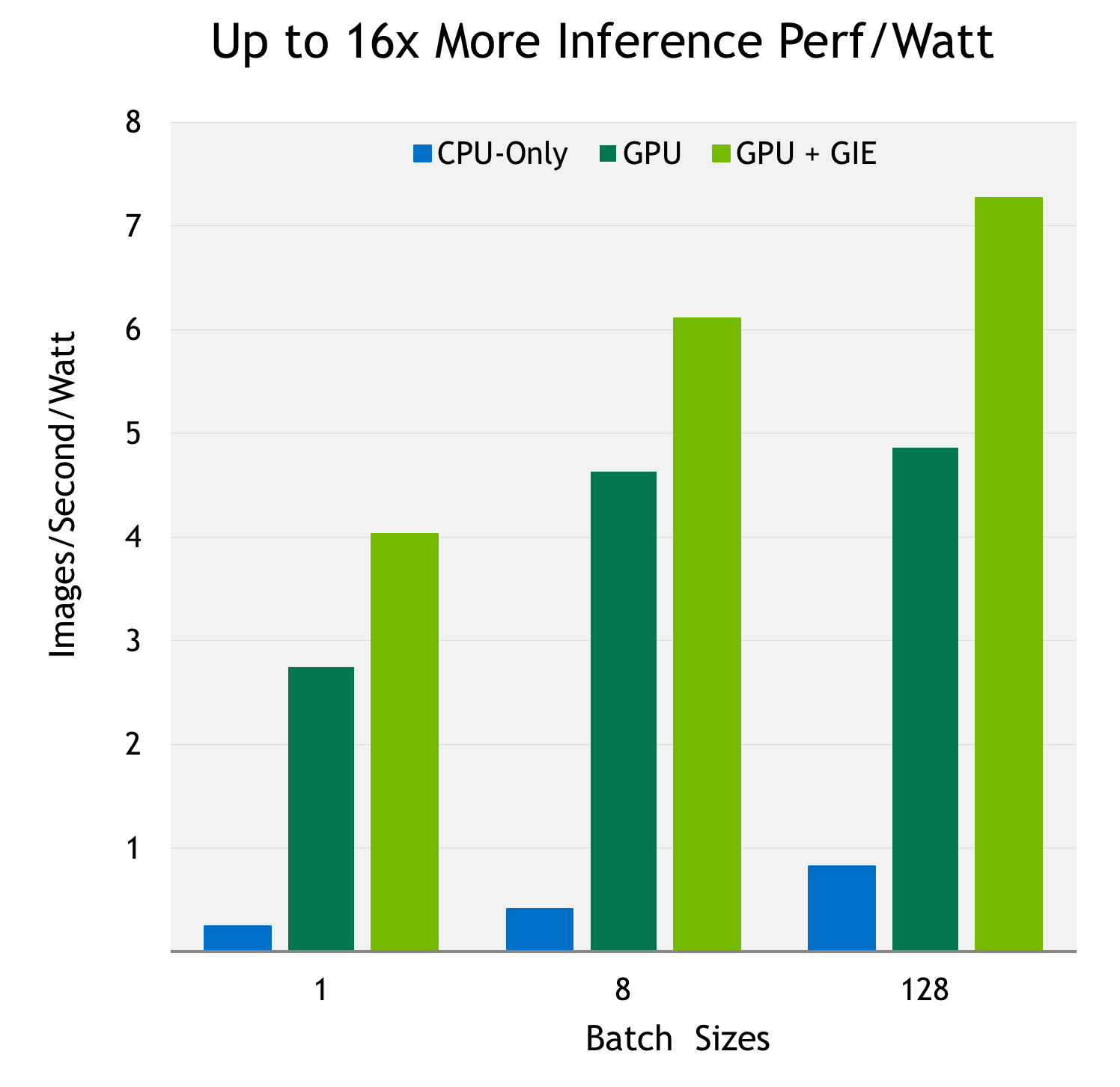

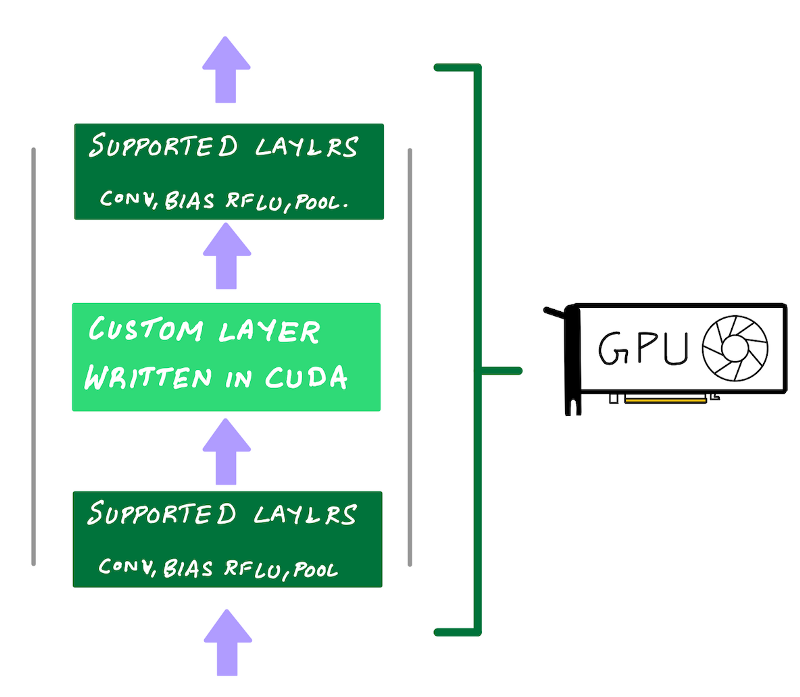

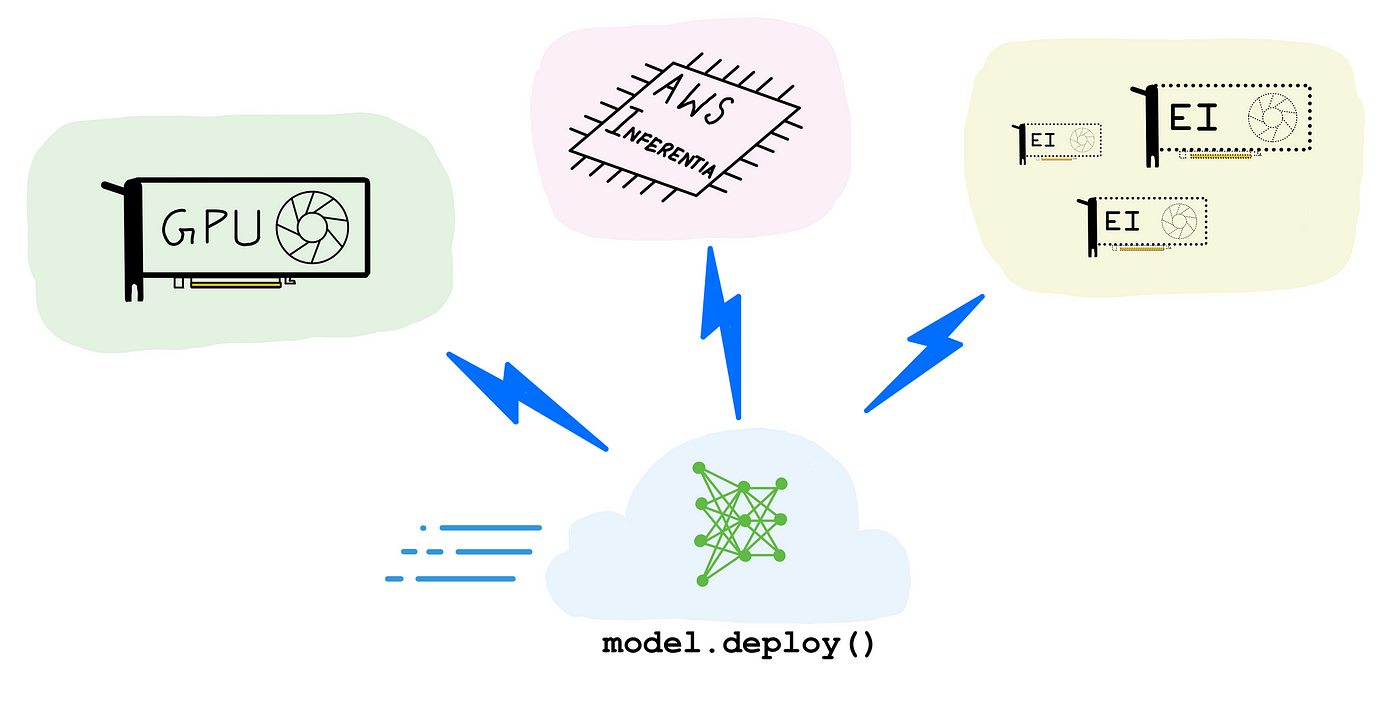

A complete guide to AI accelerators for deep learning inference — GPUs, AWS Inferentia and Amazon Elastic Inference | by Shashank Prasanna | Towards Data Science

Sun Tzu's Awesome Tips On Cpu Or Gpu For Inference - World-class cloud from India | High performance cloud infrastructure | E2E Cloud | Alternative to AWS, Azure, and GCP

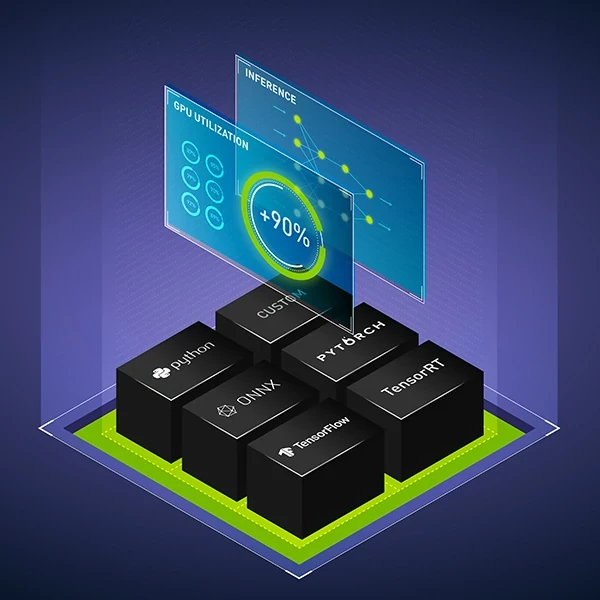

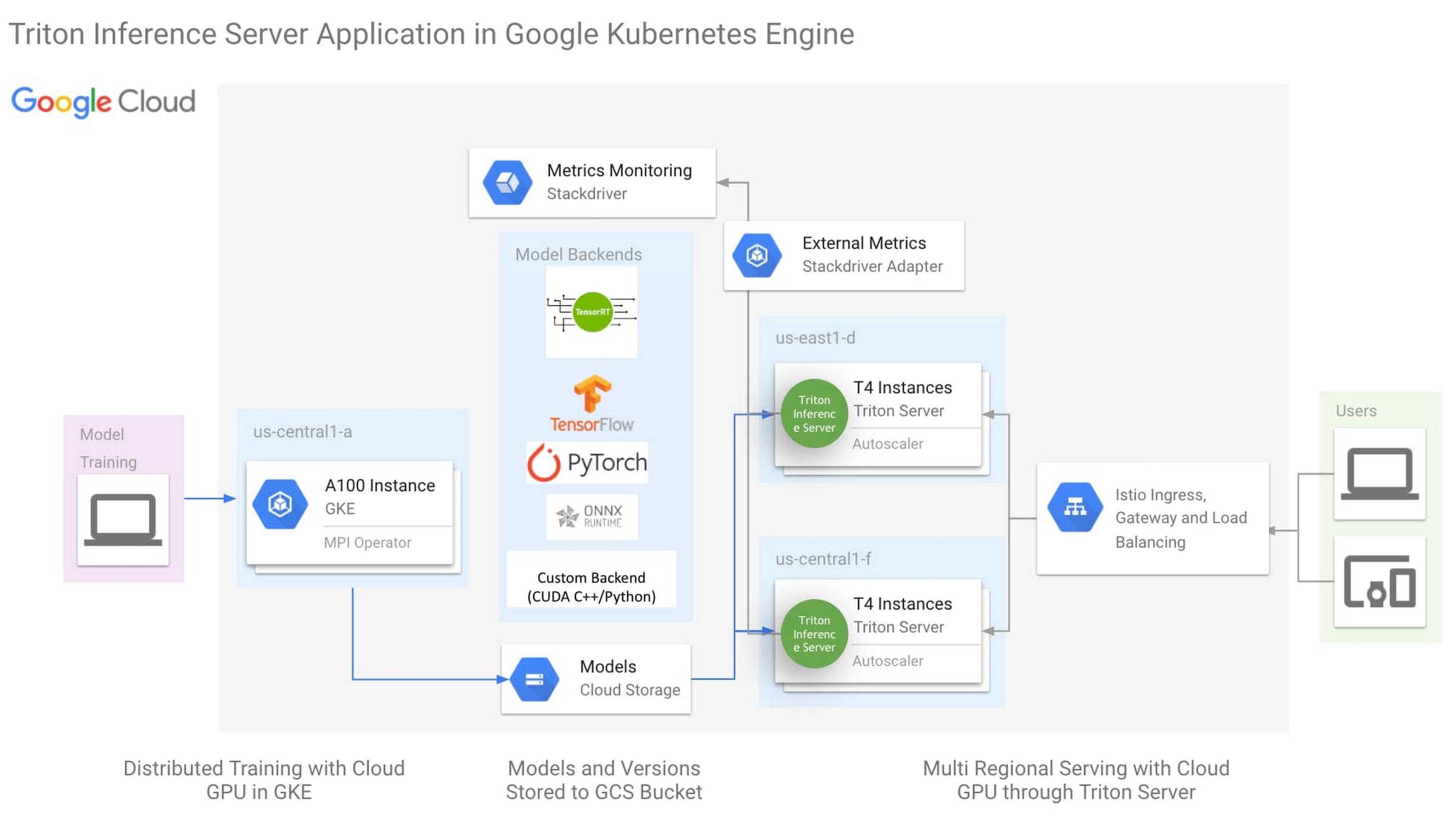

NVIDIA AI on Twitter: "Learn how #NVIDIA Triton Inference Server simplifies the deployment of #AI models at scale in production on CPUs or GPUs in our webinar on September 29 at 10am