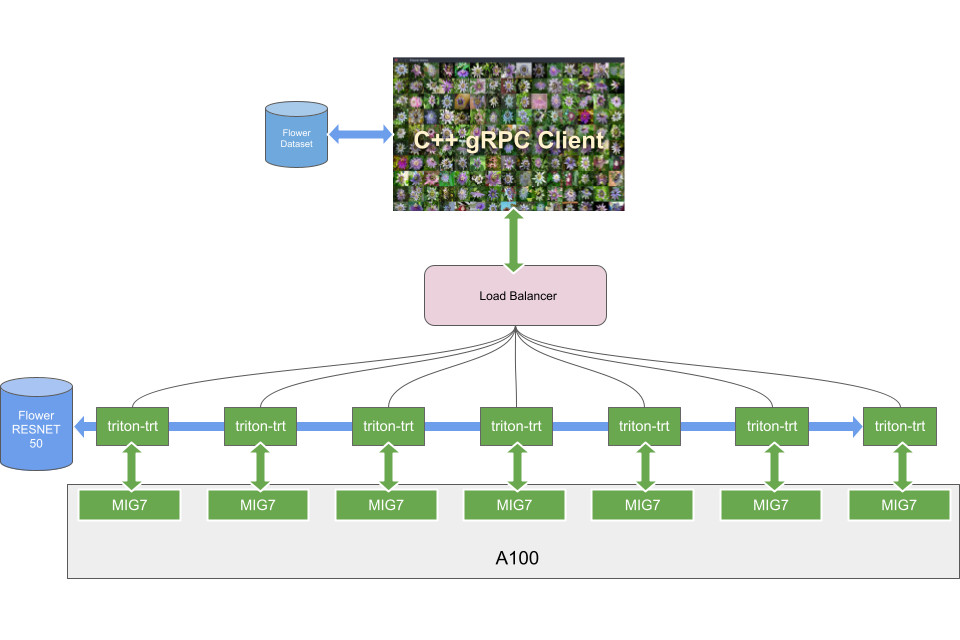

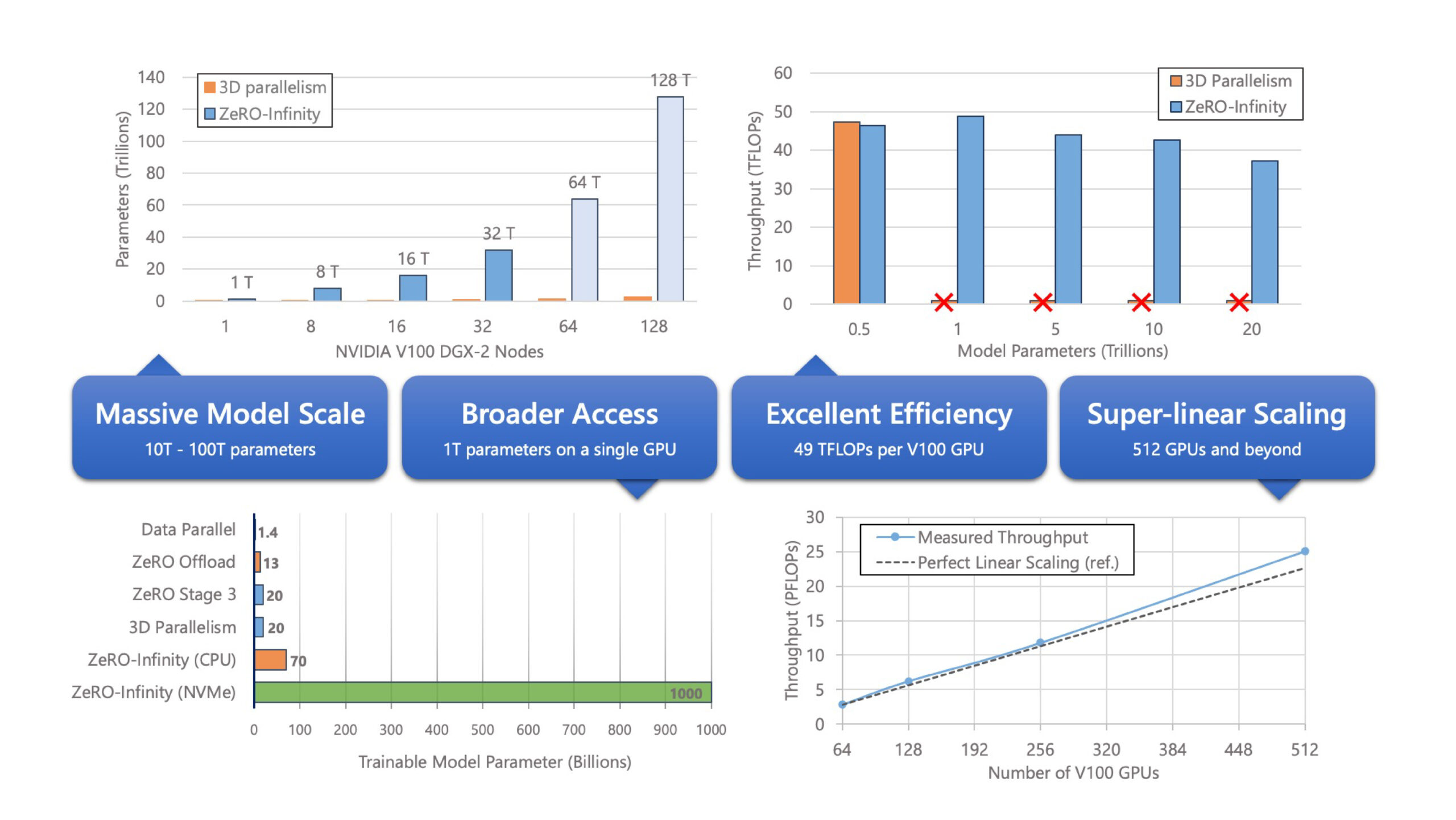

ZeRO-Infinity and DeepSpeed: Unlocking unprecedented model scale for deep learning training - Microsoft Research

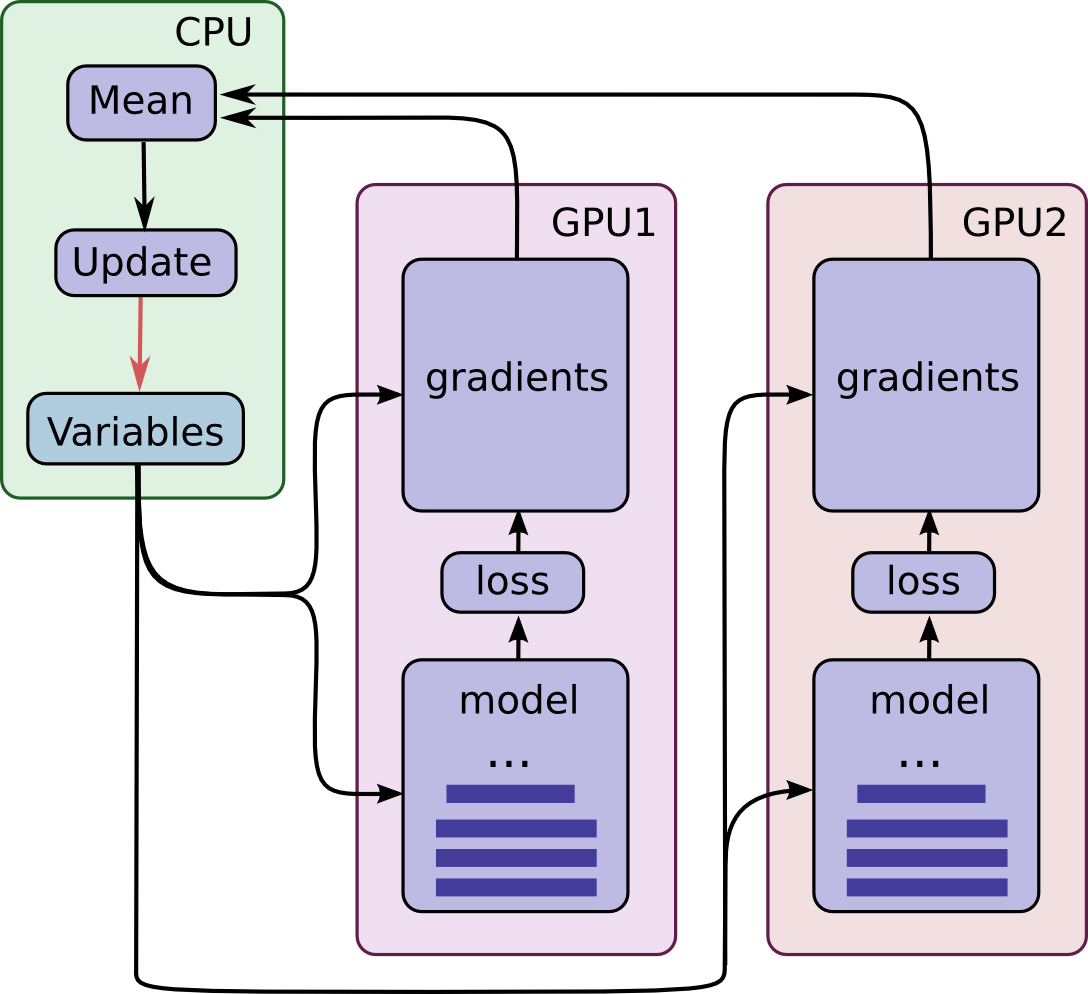

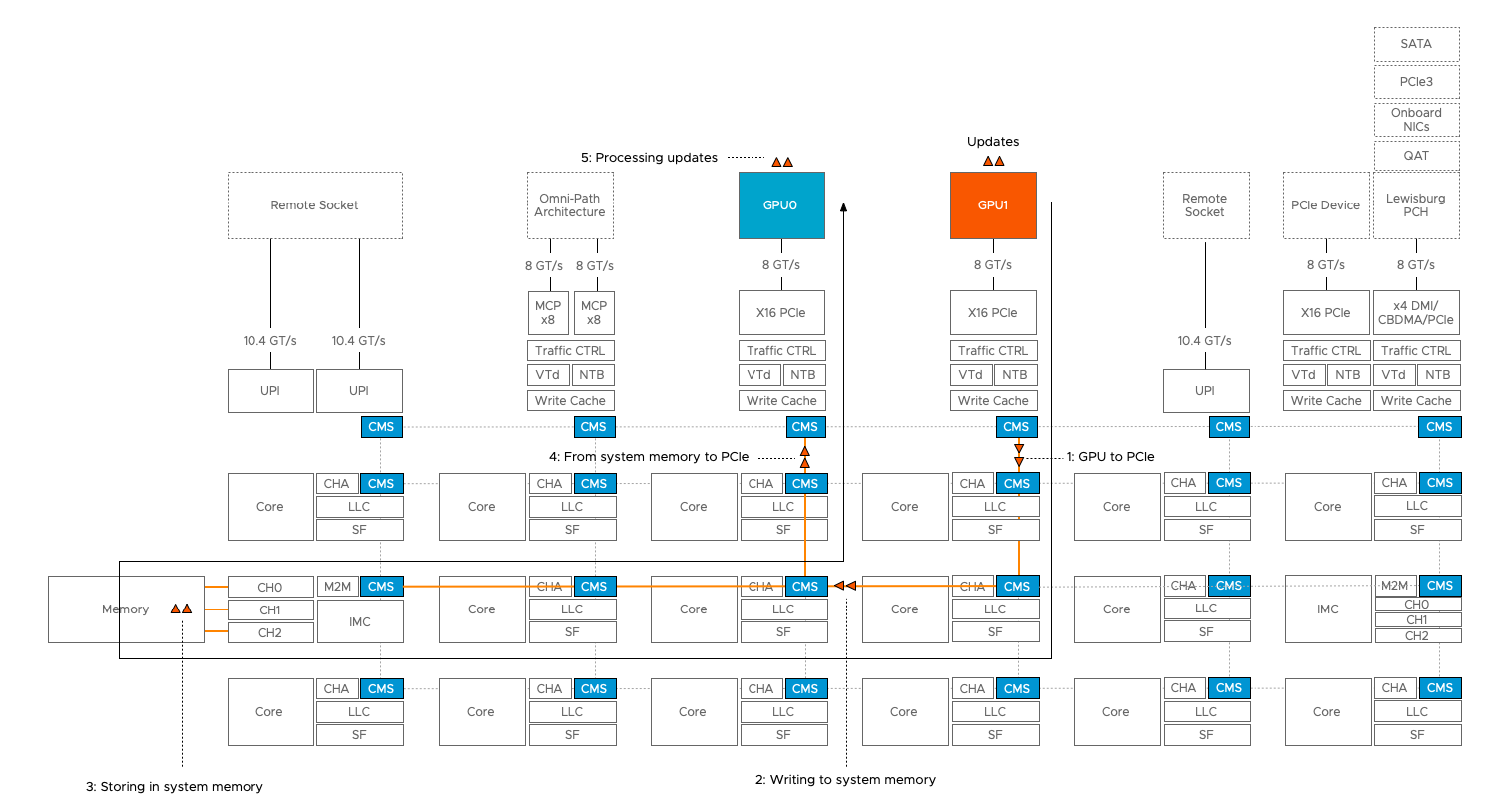

Multi-GPU training. Example using two GPUs, but scalable to all GPUs...

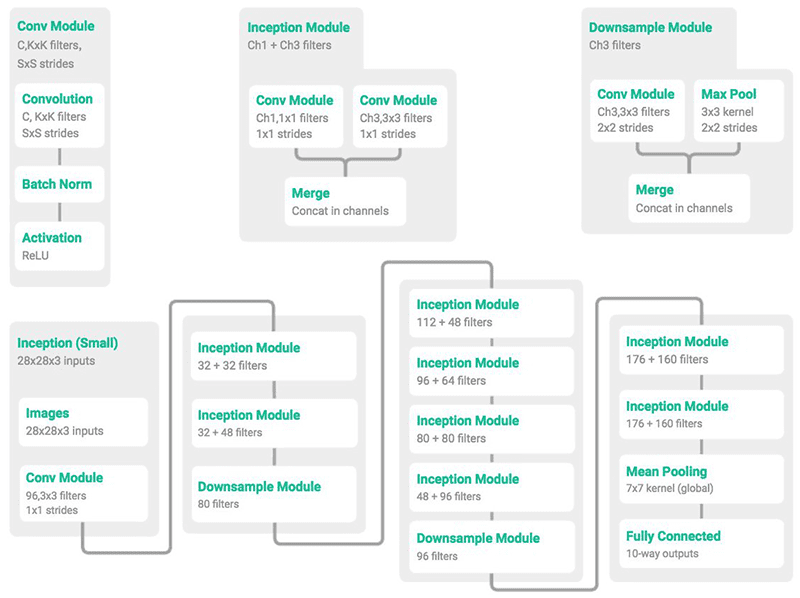

DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

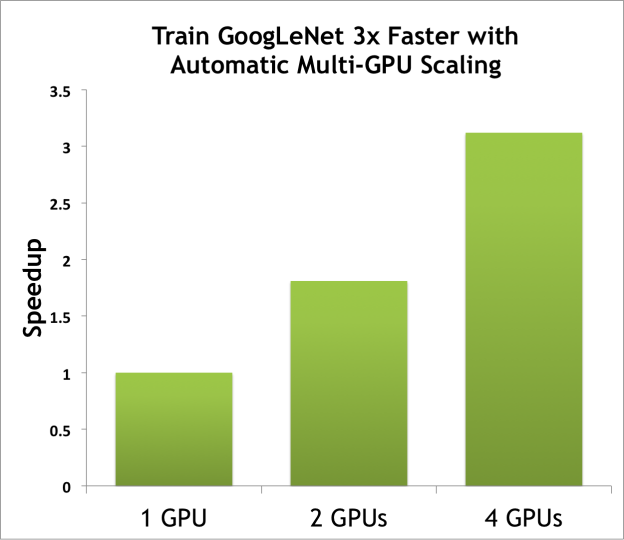

How to Build a Silent, Multi-GPU Water-Cooled Deep-Learning Rig for under $10k | by Mark Palatucci | Medium

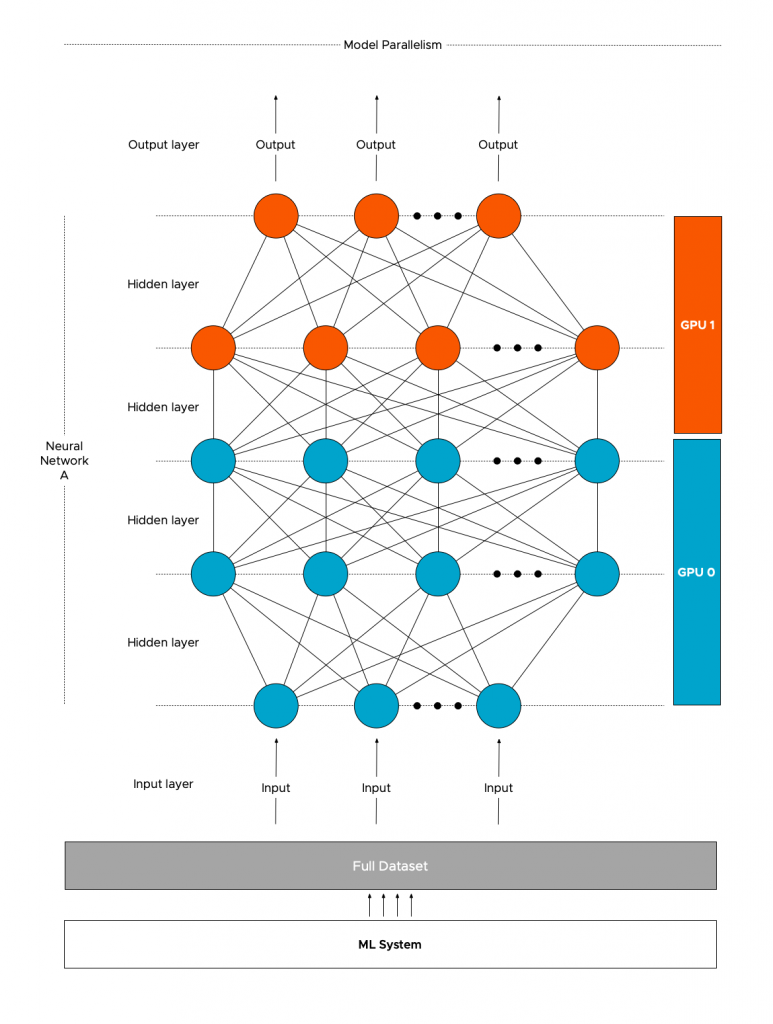

Learn PyTorch Multi-GPU properly. I'm Matthew, a carrot market machine… | by The Black Knight | Medium